The 10-Second Death Zone: How a Hardcoded Threshold Was Killing 11 Trades a Day

Our live Polymarket trading bot was scoring 11 high-conviction signals per day (avg score: 83.3) and blocking every single one. The culprit: a 10-second timing threshold we'd never questioned. Here's what production data taught us about hardcoded constants.

The bot went live on mainnet in February 2026. Real money. Real markets. Real stakes.

For the first week, everything looked fine on the surface. The system was running, signals were being evaluated, and some trades were executing. But the win rate felt softer than our backtests projected. The position sizing looked right. The signal logic hadn't changed. Something else was off — and it was hiding in plain sight.

Setting the Scene

We're running a momentum-based trading system on Polymarket, the prediction markets platform built on Polygon. The bot evaluates binary outcomes — "Will BTC close above $X?" — in ultra-short timeframes: 5-minute and 15-minute windows. When a signal scores high enough, the bot submits a TAKER order to the CLOB (Central Limit Order Book) and waits for fill confirmation.

The system has safeguards layered throughout: position limits, conviction thresholds, and timing checks. One of those timing checks exists for a very sensible reason — you don't want to submit an order for a market that's about to close. If there's less than 10 seconds until resolution, skip it. Let it go. There'll be another signal.

That's the logic. Written once, committed, and never revisited after we went live.

Here's what we didn't know: that 10-second window was a death zone.

The Discovery

After about a week of live trading, we pulled the signal logs and ran an analysis on what the bot was seeing versus what it was actually trading. The numbers that came back stopped me cold.

Eleven signals per day. Every single day. Together they averaged 83.3 on our conviction model, well above the 80-point floor we'd set as the minimum for live trading. These weren't marginal calls. These were the system's best reads.

And we were killing every one of them.

The signals were hitting in that narrow window between 7 and 10 seconds before market close — above our "too late" floor, but below our "safe to trade" ceiling. The timing check code was working exactly as written. That was the problem.

```python
# strategy.py — the guard that was eating our best signals
if time_to_close < 10.0:
    logger.debug(f"Skipping signal — too close to close: {time_to_close:.1f}s")
    return None
```

Clean. Readable. Completely wrong for our actual execution environment.
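The log analysis that surfaced this can be approximated in a few lines. This is a hypothetical reconstruction, not our actual tooling: the `Signal` record, its field names, and the sample values are assumptions for illustration. Only the 80-point floor and the 10-second guard come from the system described here.

```python
from dataclasses import dataclass

MIN_SCORE = 80.0       # conviction floor for live trading (from the article)
GUARD_SECONDS = 10.0   # the hardcoded timing guard under review


@dataclass
class Signal:
    score: float          # conviction score (hypothetical field name)
    time_to_close: float  # seconds until market close when scored


def blocked_by_timing(signals, min_score=MIN_SCORE, guard=GUARD_SECONDS):
    """Return signals that cleared the score floor but hit the timing guard."""
    return [s for s in signals if s.score >= min_score and s.time_to_close < guard]


# Made-up sample records, for illustration only.
sample = [
    Signal(score=84.1, time_to_close=8.2),   # blocked: good score, inside guard
    Signal(score=82.5, time_to_close=9.4),   # blocked: good score, inside guard
    Signal(score=86.0, time_to_close=42.0),  # tradable
    Signal(score=61.0, time_to_close=8.0),   # rejected on score, not timing
]

blocked = blocked_by_timing(sample)
avg_score = sum(s.score for s in blocked) / len(blocked)
print(f"{len(blocked)} blocked, avg score {avg_score:.1f}")
```

Rerunning `blocked_by_timing(sample, guard=7.0)` on the same sample returns an empty list, which is the kind of before/after comparison worth making against real logs.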

Why 10 Seconds Seemed Safe

When we wrote that threshold, it felt conservative in a responsible way. Ten seconds seems like a lot of time to a human. It's an eternity for a microservice. It was meant to be a buffer — a margin of safety between signal evaluation and market resolution.

The thinking was: if something goes wrong mid-submission, we don't want to be half-filled when the market closes. Better to pass. The next window opens in five minutes anyway.

That thinking was sound in the abstract. It was wrong in practice because it was based on zero empirical data about our actual execution environment. It was an assumption dressed up as a safety measure.

The Actual Data

TAKER orders on Polygon confirm in approximately 2 seconds. Not 10. Not 7. Two.

The Polygon PoS chain has ~2-second block times, and TAKER orders — by definition — match against existing liquidity in the book rather than waiting for a maker to respond. When you submit a TAKER order to the Polymarket CLOB, you're filling against resting orders. The execution path is: submission → mempool inclusion → block confirmation. On Polygon, that's roughly 2 seconds under normal conditions.

More importantly: the CLOB accepts orders until the final second before market resolution. Not until 10 seconds out. Not until 7 seconds out. Until the last second.

So our math looked like this:

| Scenario | Threshold (time to close) | Actual execution time | Verdict |
|---|---|---|---|
| Old threshold | 10s | ~2s | 8 seconds of wasted buffer |
| New threshold | 7s | ~2s | 5 seconds of buffer (safe) |
| True floor | 3s | ~2s | 1 second of buffer (risky) |

We were burning 8 seconds of buffer that we didn't need. The "safe" window we'd defined was nearly 5x wider than reality required.
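The buffer figures are just the threshold minus the execution time. A minimal sketch of that arithmetic, using the approximate 2-second confirmation time quoted above (the function name is illustrative):

```python
EXECUTION_SECONDS = 2.0  # approximate TAKER confirmation time quoted above


def buffer_seconds(threshold, execution=EXECUTION_SECONDS):
    """Spare time left after the order confirms, for a given skip threshold."""
    return threshold - execution


for label, threshold in [("old", 10.0), ("new", 7.0), ("true floor", 3.0)]:
    spare = buffer_seconds(threshold)
    print(f"{label}: threshold {threshold:.0f}s, buffer {spare:.0f}s")
```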

The Math on What We Left Behind

Let's be direct about what this cost.

Eleven signals per day at an average conviction score of 83.3. Our average bet size on high-conviction signals is approximately $5. That's $55/day in stakes we never placed, purely because of timing. Not from bad predictions. Not from market conditions. From a constant we wrote once and never challenged.

Across a 30-day month, that's $1,650 in high-conviction stakes that never touched the book.
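For anyone checking the arithmetic, here it is spelled out. Note that these figures are capital that was never deployed, not expected profit. Turning them into profit would need a win rate and payout, which this post does not report.

```python
SIGNALS_PER_DAY = 11   # blocked high-conviction signals per day
AVG_BET_USD = 5.0      # approximate bet size on high-conviction signals
DAYS_PER_MONTH = 30

daily_unplaced = SIGNALS_PER_DAY * AVG_BET_USD
monthly_unplaced = daily_unplaced * DAYS_PER_MONTH

print(f"${daily_unplaced:,.0f}/day, ${monthly_unplaced:,.0f}/month in unplaced stakes")
```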

Now, not every signal wins. Nothing does. But the point isn't the dollar figure — it's the signal quality. These were the system's best reads, not its marginal ones. If you're going to have a bug, having it suppress your highest-conviction signals is the worst possible version of that bug.

The Fix

The change itself took about two minutes once we understood the problem:

```python
# strategy.py — reduced from 10s to 7s
if time_to_close < 7.0:
    logger.debug(f"Skipping signal — too close to close: {time_to_close:.1f}s")
    return None
```

Same change in momentum_strategy.py. Committed, tested, deployed.

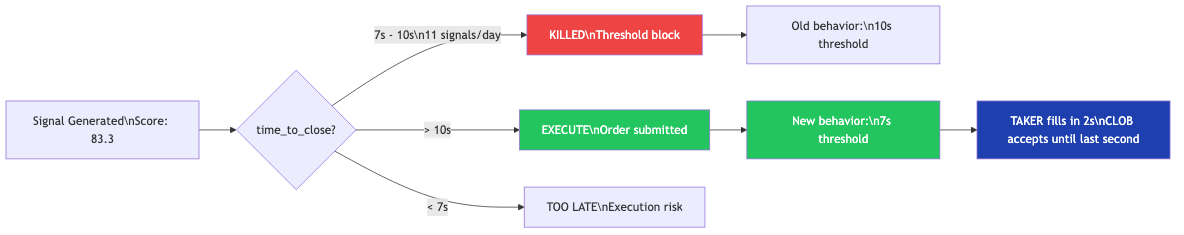

The diagram below shows the execution window before and after:

The green path — what used to require >10 seconds — now requires >7 seconds. The red zone (the death zone) collapsed from a 3-second band to nothing. Signals scoring 83+ that used to die now execute.

7 seconds gives us 5 seconds of buffer beyond confirmed TAKER execution time. That covers network jitter, rate limiting, and any edge-case latency spike without surrendering our best trades.
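One way to keep a threshold like this tied to measurements rather than intuition is to derive it from observed fill latency plus an explicit margin. This is a minimal sketch, assuming you log per-order fill latency. The sample latencies, the 4-second margin, and the helper names are hypothetical, not our production code or measured data.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of data at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def skip_threshold(latencies, margin_seconds, pct=95):
    """Skip threshold = high-percentile fill latency plus a safety margin."""
    return percentile(latencies, pct) + margin_seconds


def should_skip(time_to_close, threshold):
    """True when there is too little time left to submit safely."""
    return time_to_close < threshold


# Hypothetical fill latencies in seconds; not measured data.
latencies = [1.8, 1.9, 2.0, 2.0, 2.1, 2.1, 2.2, 2.3, 2.4, 2.6]
threshold = skip_threshold(latencies, margin_seconds=4.0)

print(f"threshold: {threshold:.1f}s")
print("skip at 8.2s under derived threshold:", should_skip(8.2, threshold))
print("skip at 8.2s under old 10s guard:", should_skip(8.2, 10.0))
```

The design choice here is that the margin is stated separately from the measured latency, so a change in either one is visible in review instead of being folded into a single magic number.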

The Broader Lesson: Hardcoded Constants Are Invisible Bugs

This isn't really a story about three seconds. It's a story about a category of bug that's almost impossible to see on paper and surprisingly common in production systems.

Hardcoded constants feel like engineering maturity. They feel like someone thought carefully about a number, committed it, and moved on. They look like decisions. They read like safety.

What they actually are: assumptions that stopped being questioned the moment they were written down.

The 10-second threshold was never validated against real execution data because at the time we wrote it, we didn't have real execution data. We were pre-mainnet. The number came from first principles and intuition. That's fine — you have to start somewhere. The mistake was treating the initial estimate as permanent.

Production data changes the game. The moment you have real latency numbers, real confirmation times, real fill rates — every hardcoded constant in your system becomes a candidate for review. Most of them will be fine. A few of them will be quietly costing you every day.

The terrifying part is that the system won't tell you. The logs showed "skipped signal." Everything looked nominal. The bot was working correctly. The bug was in the definition of "correct."

What to Review in Your Own Systems

If you run any production system with timing thresholds, execution windows, or latency-based guards, here's what this story suggests you revisit:

1. Validate constants against actual performance data. What does your P95 execution time actually look like? Is your safety margin based on measurements or estimates?

2. Instrument the kill paths. Log every time a signal, request, or operation is blocked by a threshold. If that number is non-zero and consistent, investigate. "Skipped" is often code for "missed."

3. Separate "safe" from "conservative." 10 seconds felt safe. 7 seconds is safe. The difference between them was 11 trades per day. Safe doesn't mean maximally cautious — it means appropriately calibrated.

4. Treat initial thresholds as hypotheses. The first time you set a constant, you're making a guess. Label it as such in the code. Add a comment that says "validate against production data." Then actually do it.

```python
# INITIAL ESTIMATE — validate against actual TAKER fill times in production
# Polygon PoS: ~2s block time; CLOB accepts until T-1s
# Revisit if fill latency changes or network conditions shift
EXECUTION_BUFFER_SECONDS = 7.0
```

That comment is free. The discipline it encodes is not.
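Point 2 in the list, instrumenting the kill paths, can be sketched as a small counter that tracks how often each guard blocks a signal and how many of those signals were high-conviction. The class, method, and guard names below are hypothetical. The 80-point floor is the one used throughout this post.

```python
from collections import Counter


class KillPathStats:
    """Counts skipped signals per guard, separating out high-conviction ones."""

    def __init__(self, high_conviction_floor=80.0):
        self.high_conviction_floor = high_conviction_floor
        self.skips = Counter()                  # all skips, keyed by guard name
        self.high_conviction_skips = Counter()  # skips at or above the floor

    def record_skip(self, guard, score):
        """Call this wherever a guard returns early instead of trading."""
        self.skips[guard] += 1
        if score >= self.high_conviction_floor:
            self.high_conviction_skips[guard] += 1

    def suspicious_guards(self, min_count=1):
        """Guards that blocked at least min_count high-conviction signals."""
        return sorted(
            guard
            for guard, count in self.high_conviction_skips.items()
            if count >= min_count
        )


# Illustrative usage with made-up scores.
stats = KillPathStats()
stats.record_skip("timing", 84.1)
stats.record_skip("timing", 82.5)
stats.record_skip("position_limit", 55.0)
print(stats.suspicious_guards())
```

A guard that shows up in `suspicious_guards()` day after day is the pattern worth investigating: a non-zero, consistent count of blocked signals that otherwise met the bar.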

The Signal This Sends

What production taught us about this threshold will teach us more things about other constants. That's the pattern. You write systems with estimates. Production replaces estimates with data. The engineers who close the loop between those two things build systems that compound in quality over time. The ones who don't keep running systems with invisible assumptions baked in.

We caught this one at a week. Some systems run for years with a 10-second death zone no one ever questioned.

Go check your thresholds. Look at your kill paths. Find the log lines that say "skipped" and ask why. The signal you're suppressing might be the one you most needed to hear.
